First – thanks for the excellent articles — they really helped me deal with the fun of installing SQL Server 2008 on a Windows 2008 R2 cluster. My question is about the binding order of the network adapters in the Windows Cluster install.

This is a step-by-step guide on how to set up a failover cluster in Windows Server 2008R2, in a test lab. A cluster is a group of independent computers that work together to increase the availability of applications and services, such as file server service or print server service. Physical cables and software connect the clustered servers (called nodes) so that if one fails another can take its place.

To set up a failover cluster, the following requirements must be met:
» The nodes in the cluster must have at least two NICs each: one for the production environment, and one for the heartbeat signal between cluster nodes.
» The nodes in the cluster must have access to shared storage, be it iSCSI, SAN, NAS etc.
» An AD domain, where the nodes must be members of the same domain.

» There are other requirements as well, but essentially you just need a user account with administrative privileges on the nodes, and the Create Computer objects and Read All Properties permissions in the container/OU that is used for computer accounts in the domain.

Software you will need to perform this exercise:
» VirtualBox (or your virtualization product of choice)

1. Installation of VirtualBox and Virtual Machines

1.1 Download and install VirtualBox. The installation procedure is quite straightforward, so I'm not going to go into detail about it. Now create three virtual machines, with 1GB RAM and 20GB disk each:
Server01: Domain Controller, will need 2 NICs
Server02: Cluster node, will need 3 NICs
Server03: Cluster node, will need 3 NICs

Give Server02 and Server03 three NICs each, while Server01 will only have two. The NICs serve the following purposes:
Production – The clients will connect to the cluster through this NIC
iSCSI – The nodes of the cluster will connect to the shared storage on Server01 through this NIC
Heartbeat – The heartbeat signal between the two nodes will be sent through this NIC

1.2 Mount the Server 2008R2 ISO on the three virtual machines, and install Server 2008R2 Enterprise edition (Full Installation).

The Failover Clustering feature is included in the Enterprise and Datacenter editions of 2008R2 only. You can install Standard Edition on Server01 if you like; it does not make a difference.

1.3 Rename the three computers to Server01, Server02 and Server03 in Windows. Then give them the following IP addresses.
Nic3, ip = 192.168.2.30, subnetmask = 255.255.255.0

1.4 Install the Guest Additions for VirtualBox, and restart the servers.

2. Installation of Active Directory

2.1 Install Active Directory on Server01.

2.2 On Server01, open the properties of NIC2 (the one designated for iSCSI), then browse to the properties of IPv4, click Advanced, and make these changes

2.3 On Server01, click Start → Administrative Tools → DNS → right-click Server01 → Properties, and then make these changes

2.4 Join Server02 and Server03 to the domain.

3. Installation of StarWind iSCSI SAN Free Edition

3.1 Install StarWind iSCSI SAN Free Edition on Server01. You will use it to create iSCSI targets and shared storage. Each service/application in the cluster will require its own shared storage.

3.2 After having created a target and storage on Server01, connect Server02 and Server03 to the target and the storage. Remember to use the 192.168.1.10 IP address of Server01 to connect to the iSCSI target.

3.3 Initialize the disk, bring it online and create a single volume, formatted with NTFS, on Server02 only.
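The three-network layout used in this lab can be sanity-checked with a few lines of Python. This is only a sketch: the guide states the production segment (192.168.0.0/24) explicitly, while the iSCSI and heartbeat subnets below are assumptions inferred from the addresses 192.168.1.10 and 192.168.2.30 that appear in the steps. The names `SUBNETS`, `role_of` and `subnets_overlap` are made up for this illustration.

```python
import ipaddress
from typing import Optional

# Assumed lab subnets. Only the production segment is spelled out in the guide;
# the other two are inferred from the sample addresses and may differ in your lab.
SUBNETS = {
    "production": ipaddress.ip_network("192.168.0.0/24"),
    "iscsi": ipaddress.ip_network("192.168.1.0/24"),
    "heartbeat": ipaddress.ip_network("192.168.2.0/24"),
}


def role_of(address: str) -> Optional[str]:
    """Return the name of the lab subnet that contains the address, if any."""
    ip = ipaddress.ip_address(address)
    for role, network in SUBNETS.items():
        if ip in network:
            return role
    return None


def subnets_overlap() -> bool:
    """Return True if any two of the lab subnets share addresses."""
    nets = list(SUBNETS.values())
    return any(a.overlaps(b) for i, a in enumerate(nets) for b in nets[i + 1:])


if __name__ == "__main__":
    for addr in ("192.168.1.10", "192.168.2.30", "192.168.0.50"):
        print(addr, "->", role_of(addr))
    print("overlap:", subnets_overlap())
```

Keeping each kind of traffic on its own non-overlapping subnet makes it easy to tell which NIC a given address belongs to when the cluster wizard later asks which networks to use.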

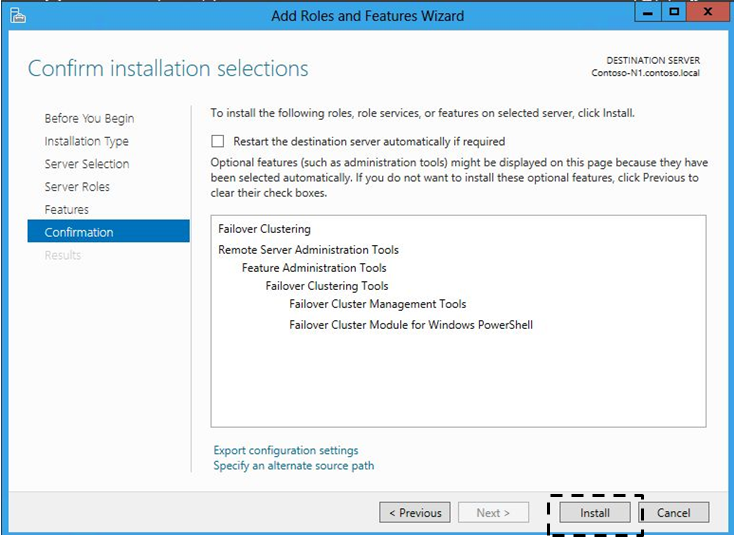

Here I have created a volume labeled Shared Storage, and assigned it the drive letter E: on Server02.

4. Installation of Failover Clustering Feature

Install the Failover Clustering feature on Server02 and Server03. You can either create a new domain account (remember the permissions it needs), or use the built-in Administrator account of the domain, to log on to the servers.

4.1 Start Server Manager → Features → Add Features → Choose Failover Clustering → Next → Install → Close

5. Validate and create the cluster

5.1 Click Start → Administrative Tools → Failover Cluster Manager

5.2 Right-click Failover Cluster Manager → Validate a Configuration

5.3 This will start the Validate a Configuration Wizard, click Next

5.4 Click Browse

5.5 Write Server02;Server03, and then click Check Names, click OK

5.6 Click Next

5.7 Click Next → Next, the wizard will run all tests

5.8 When all tests are finished, you will be presented with a report. You can view it by clicking View Report. As you can see, I received some warnings on the network configuration of the servers. If you click the Network link in the report, you can see a description of the warning.

Since this is a test lab and not a production environment, you can safely ignore warnings of this kind. The other warning you will receive is about drivers being unsigned.

Once again, this warning can be ignored in a test lab. All validation reports are saved in %systemroot%\Cluster\Reports, so you can view them any time you like.

5.9 Click Create the cluster now using the validated nodes

5.10 The Create Cluster Wizard starts, click Next

5.11 Type the name you want to assign to the cluster, and then give it an IP address. As you remember, Nic1 was for the production environment, so clear the checkboxes for the other two NICs and only assign the cluster an address in the 192.168.0.0/24 segment, such as 192.168.0.50. Click Next twice, and the cluster will be installed.

5.12 Click Finish on the Summary page, and you are presented with the cluster you just created

6. Configure Quorum Type on the Cluster

6.1 As you can see in the previous screenshot, the Quorum Configuration is Node and Disk Majority. Since we currently have only one shared disk, we cannot use this quorum configuration, because that disk is being used as the quorum disk.

So let's just change it to Node and File Share Majority.

6.2 First we must create a file share on Server01, and give it the appropriate share and NTFS permissions. On Server01 create a folder called Cluster01, right-click it and choose Properties. On the Sharing tab, click Advanced Sharing, choose to share the folder and click Permissions. Click Add

6.3 Click Object Types

6.4 Tick Computers

6.5 Write Cluster01, click OK

6.6 Give the Cluster01 Virtual Computer Object Full Control permissions. Click OK twice

6.7 Now choose the Security tab, and give the Cluster01 Virtual Computer Object Full Control permissions here as well

6.8 On Server02, in Failover Cluster Manager, right-click Cluster01, choose More Actions, and then choose Configure Cluster Quorum Settings

6.9 This will start the Configure Cluster Quorum Wizard, click Next

6.10 Choose Node and File Share Majority

6.11 Write the path to the share we created in step 6.2, or browse to it, then click Next, Next again, and Finish.
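The arithmetic behind Node and File Share Majority can be sketched as follows. This is a simplified model, assuming each node and the file share witness carry exactly one vote and that the cluster keeps running while more than half of all votes are reachable; the function names are made up for the example and it ignores the finer details of how the real cluster service handles the witness.

```python
def votes_required(total_votes: int) -> int:
    """Smallest number of votes that is strictly more than half of the total."""
    return total_votes // 2 + 1


def has_quorum(total_nodes: int, nodes_up: int, witness_up: bool) -> bool:
    """Simplified check: one vote per node plus one vote for the file share witness."""
    total_votes = total_nodes + 1  # the +1 is the file share witness
    present = nodes_up + (1 if witness_up else 0)
    return present >= votes_required(total_votes)


if __name__ == "__main__":
    # Two-node lab cluster with a file share witness on Server01.
    print(votes_required(3))                                       # 2
    print(has_quorum(total_nodes=2, nodes_up=1, witness_up=True))   # True
    print(has_quorum(total_nodes=2, nodes_up=1, witness_up=False))  # False
```

With two nodes and a witness there are three votes, so the cluster survives the loss of any single vote, which is the reason for adding a witness to an even number of nodes.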

7. Configure Service or Application

7.1 For this test lab's purpose, let's configure the File Server service on the cluster. But before we can do that, we need to add the File Services role on both nodes.

7.2 Start Server Manager - Roles - Add Roles - Add the File Services role - On Role Services, choose File Server and File Server Resource Manager - Next - Next - Install

7.3 Now that the File Services role has been added on both nodes, head back to Failover Cluster Manager

7.4 In Failover Cluster Manager, right-click Services and applications, and choose Configure a Service or Application

7.5 On the wizard's first page, click Next

7.6 Choose File Server, and then click Next

7.7 Give your new File Server a name and an IP address. This is the name clients will use to connect to the file server

7.8 Select Available shared storage (this is the storage we set up in StarWind iSCSI SAN). Next - Next - Finish

7.9 And that's it. You now have a clustered file server that clients can connect to, and which will automatically fail over to the second node if the first node fails.

7.10 If you start Active Directory Users and Computers, you will see two virtual computer objects that have been created for Cluster01 and Fileserver01.

This chapter of Windows Server 2008 R2 Essentials covers a concept referred to as Network Load Balancing (NLB) clustering using Windows Server 2008 R2, a key tool for creating scalable and fault tolerant network server environments. In previous versions of Windows Server, cluster configuration was seen by many as something of a 'black art'. As this chapter will hopefully demonstrate, building clusters with Windows Server 2008 R2 is arguably now about as easy as Microsoft could possibly make it.

An Overview of Network Load Balancing Clusters

Network Load Balancing provides failover and high scalability for Internet Protocol (IP) based services, with support for Transmission Control Protocol (TCP), User Datagram Protocol (UDP) and Generic Routing Encapsulation (GRE) traffic. Each server in a cluster is referred to as a node.

Network Load Balance Clustering support varies between the different editions of Windows Server 2008 R2 with support for clusters containing 2 up to a maximum of 32 nodes. Network Load Balancing assigns a virtual IP address to the cluster. When a client request arrives at the cluster this virtual IP address is mapped to the real address of a specific node in the cluster based on configuration settings and server availability. When a server fails, traffic is diverted to another server in the cluster. When the failed node is brought back online it is then re-assigned a share of the load. From a user perspective the load balanced cluster appears to all intents and purposes as a single server represented by one or more virtual IP addresses. The failure of a node in a cluster is detected by the absence of heartbeats from that node.
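Heartbeat-based failure detection can be illustrated with a small model. This is a sketch of the general idea, not the NLB driver's actual logic: the `HeartbeatMonitor` class, the node names and the five-second timeout are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class HeartbeatMonitor:
    """Tracks when each node was last heard from (times are in seconds)."""
    timeout: float  # assumed value; how long a node may stay silent
    last_seen: Dict[str, float] = field(default_factory=dict)

    def record(self, node: str, now: float) -> None:
        """Note that a heartbeat from the node arrived at time `now`."""
        self.last_seen[node] = now

    def failed_nodes(self, now: float) -> List[str]:
        """Return the nodes whose last heartbeat is older than the timeout."""
        return sorted(
            node for node, seen in self.last_seen.items()
            if now - seen > self.timeout
        )


if __name__ == "__main__":
    monitor = HeartbeatMonitor(timeout=5.0)
    monitor.record("node1", now=0.0)
    monitor.record("node2", now=0.0)
    monitor.record("node1", now=4.0)      # node2 has gone quiet
    print(monitor.failed_nodes(now=6.0))  # ['node2']
```

Passing the current time in as an argument, rather than reading the clock inside the class, keeps the model easy to test.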

If a node fails to transmit a heartbeat packet for a designated period of time, that node is assumed to have failed and the remaining nodes take over the workload of the failed server. Nodes in a Network Load Balanced cluster typically do not share data, instead each storing a local copy of data. Under such a scenario the cluster is referred to as a farm. This approach is ideal for load balancing of web servers where the same static web site data is stored on each node. In an alternative configuration, referred to as a pack, the nodes in the cluster all access shared data. In this scenario the data is partitioned such that each node in the cluster is responsible for accessing different parts of the shared data.

This is commonly used with database servers, with each node having access to different parts of the database data with no overlap (a concept also known as shared nothing).

Network Load Balancing Models

Windows Server 2008 R2 Network Load Balancing clustering can be configured using either one or two network adapters in each node, although for maximum performance two adapters are recommended. In such a configuration one adapter is used for communication between cluster nodes (the cluster adapter) and the other for communication with the outside network (the dedicated adapter). The four basic Network Load Balancing modes are as follows:

Unicast with Single Network Adapter - The MAC address of the network adapter is disabled and the cluster MAC address is used. Traffic is received by all nodes in the cluster and filtered by the NLB driver. Nodes in the cluster are able to communicate with addresses outside the cluster subnet, but node to node communication within the cluster subnet is not possible.

Unicast with Multiple Network Adapters - The MAC address of the network adapter is disabled and the cluster MAC address is used. Traffic is received by all nodes in the cluster and filtered by the NLB driver. Nodes within the cluster are able to communicate with each other within the cluster subnet and also with addresses outside the subnet.

Multicast with Single Network Adapter - Both network adapter and cluster MAC addresses are enabled. Nodes within the cluster are able to communicate with each other within the cluster subnet and also with addresses outside the subnet. Not recommended where port rules are configured to direct significant levels of traffic to specific cluster nodes.

Multicast with Multiple Network Adapters - Both network adapter and cluster MAC addresses are enabled. Nodes within the cluster are able to communicate with each other within the cluster subnet and also with addresses outside the subnet. This is the ideal configuration for environments where there are significant levels of traffic directed to specific cluster nodes.

Configuring Port and Client Affinity

Network traffic arrives on one of a number of different ports (for example FTP traffic uses ports 20 and 21 while HTTP traffic uses port 80). Network Load Balancing may be configured on a port by port basis or for a range of ports.

For each port three options are available to control the forwarding of the traffic:

Single Host - Traffic to the designated port is forwarded to a single node in the cluster.

Multiple Hosts - Traffic to the designated port is distributed between the nodes in the cluster.

Disabled - Traffic to the designated port is blocked.
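One way to picture port rules is as a list of port ranges, each tagged with one of the three options above. The sketch below is a hypothetical data model, not the NLB configuration format: `PortRule`, `find_rule` and the sample rules are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class PortRule:
    """A port range and the option chosen for it (hypothetical model)."""
    start: int
    end: int
    mode: str  # one of "single", "multiple", "disabled"


def find_rule(rules: List[PortRule], port: int) -> Optional[PortRule]:
    """Return the first rule whose range contains the port, or None."""
    for rule in rules:
        if rule.start <= port <= rule.end:
            return rule
    return None


if __name__ == "__main__":
    rules = [
        PortRule(20, 21, "single"),    # FTP handled by one node
        PortRule(80, 80, "multiple"),  # HTTP spread across the nodes
    ]
    for port in (21, 80, 443):
        rule = find_rule(rules, port)
        print(port, "->", rule.mode if rule else "no matching rule")
```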

Many client/server communications take place within a session. As such, the server application will typically maintain some form of session state during the client server transaction. Whilst this is not a problem in the case of a Single Host configuration described above, clearly problems may arise if a client is diverted to a different cluster node partway through a session since the new server will not have access to the session state. Windows Server 2008 R2 Network Load Balancing addresses this issue by providing a number of client affinity configuration options.

Client affinity involves the tracking of both destination port and source IP address information to optionally ensure that all traffic to a specific port from a client is directed to the same server in the cluster. The available client affinity settings are as follows:

Single - Requests from a single source IP address are directed to the same cluster node.

Network - Requests originating from within the same Class C network address range are directed to the same cluster node.

None - No client affinity. Requests are directed to nodes regardless of previous assignments.

Installing the Network Load Balancing Feature

The first step in building a load balanced cluster is to install the Network Load Balancing feature on each server which is to become a member of the cluster. This can be achieved by starting the Server Manager tool, selecting Features from the left panel and then clicking on the Add Features link. In the list of available features, select Network Load Balancing and click on the Next button followed by Install. Once installation is complete both the graphical Network Load Balancing Manager and the command line NLB Cluster Control Utility (named nlbmgr.exe and nlb.exe respectively) will be installed ready for use.
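Returning to the three client affinity settings listed earlier, their effect on node selection can be sketched in Python. The real NLB driver uses its own distribution algorithm; the CRC32 hash below is a stand-in chosen only so the example is deterministic, and `affinity_key` and `pick_node` are hypothetical names. The sketch also assumes IPv4 clients and treats a Class C range as a /24 prefix.

```python
import ipaddress
import zlib
from typing import List


def affinity_key(client_ip: str, client_port: int, affinity: str) -> str:
    """Build the value that decides which node a request is sent to."""
    if affinity == "single":
        # Everything from the same source IP address stays together.
        return client_ip
    if affinity == "network":
        # Everything from the same Class C (/24) range stays together.
        network = ipaddress.ip_network(f"{client_ip}/24", strict=False)
        return str(network.network_address)
    if affinity == "none":
        # Source port is included, so one client may reach several nodes.
        return f"{client_ip}:{client_port}"
    raise ValueError(f"unknown affinity setting: {affinity}")


def pick_node(nodes: List[str], client_ip: str, client_port: int, affinity: str) -> str:
    """Map a request to a node with a stand-in hash (not the NLB algorithm)."""
    key = affinity_key(client_ip, client_port, affinity)
    return nodes[zlib.crc32(key.encode("ascii")) % len(nodes)]


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3"]
    print(pick_node(nodes, "10.1.2.3", 50000, "single"))
    print(pick_node(nodes, "10.1.2.3", 50001, "single"))    # same node as above
    print(pick_node(nodes, "10.1.2.200", 50000, "network")) # same /24, same node
```

The point of the sketch is only that the affinity setting changes what goes into the key: the fewer fields in the key, the more requests are pinned to the same node.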

Building a Windows Server 2008 R2 Network Load Balanced Cluster

Network Load Balanced clusters are built using the Network Load Balancing Manager, which may be launched from the Start - All Programs - Administrative Tools menu or from a command prompt by executing nlbmgr. Once loaded, the manager will appear as shown in the following figure:

To pre-configure the account and password credentials to be used on each node in the cluster, select the Options - Credentials menu option and enter an account and password, keeping in mind that the account used must be a member of the administrators group. If default credentials are not configured, the user will be prompted for account and password information each time a connection to a cluster node is established.

To begin the cluster creation process, right click on the Network Load Balancing Clusters entry in the left panel of the manager window and select the New Cluster menu option. This will display the New Cluster connection dialog. In this dialog, enter either the name or IP address of the first server to be included in the load balanced cluster and press the Connect button to establish a connection to that server.

If the connection is successful the first server will be listed as shown below. Clicking Next will display a warning that DHCP will be turned off for the network adapter of the specified host and that any necessary gateway information will need to be configured manually using the server's network connection properties dialog (accessible from the Control Panel). Subsequently, the Host Parameters screen will appear as shown below:

The Priority (unique host ID) is a number between 1 and 32 and serves two purposes. Firstly, the number provides a unique ID within the cluster to distinguish the server from other nodes. Secondly, it specifies the priority order of the cluster. The cluster node with the lowest priority number is assigned to handle all traffic that is not covered by a port rule. All servers joining a cluster must have a unique ID. A new server attempting to join a cluster with a conflicting ID will be denied membership.

The Dedicated IP addresses fields are used when a single network adapter is used for both communication between cluster nodes and external network traffic. They specify the host's unique IP address, which is used for non-cluster network traffic (i.e. direct connections to the specific server from outside the cluster without being affected by Network Load Balancing). This must be a fixed IP address and not a DHCP address, and as such should also be entered into the network properties dialog of the node. To configure dedicated IP addresses, click on the Add button and enter the IP address and subnet mask (for example 255.255.255.0).
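The host priority rules described above (IDs from 1 to 32, no duplicates, and the lowest one handling traffic not covered by a port rule) can be expressed as two small checks. This is a sketch under the assumption that "lowest priority" means the lowest numeric host ID; `can_join` and `default_host` are hypothetical helper names, not part of any NLB tool.

```python
from typing import Set


def can_join(existing_ids: Set[int], new_id: int) -> bool:
    """A host may join only with an ID from 1 to 32 that no other member uses."""
    return 1 <= new_id <= 32 and new_id not in existing_ids


def default_host(active_ids: Set[int]) -> int:
    """ID of the host that handles traffic not covered by any port rule
    (assumed here to be the lowest numeric ID among the active members)."""
    if not active_ids:
        raise ValueError("the cluster has no active hosts")
    return min(active_ids)


if __name__ == "__main__":
    members = {1, 2}
    print(can_join(members, 2))   # False: conflicting ID
    print(can_join(members, 3))   # True
    print(default_host(members))  # 1
    print(default_host({2, 3}))   # 2: host 1 is offline, so host 2 takes over
```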

The Initial host state setting controls the initial state of the node when the system is started. The default is for the server to start as an active participant in the cluster. Alternative options are Suspend and Stop.

Clicking Next displays the Cluster IP addresses screen. These are the virtual IP addresses by which the cluster will be accessible on the network. These IP addresses are shared by all nodes in the cluster and a cluster may have multiple virtual IP addresses. Once the cluster IP addresses are specified, click on Next to proceed to the Cluster Parameters screen: On this screen, enter the full internet name of the cluster (for example cluster.techotopia.com) and choose the appropriate Cluster operation mode.

As outlined earlier in this chapter, the options here consist of Unicast, Multicast and IGMP multicast. IGMP multicast IPv4 addresses are limited to the Class D address range. Once selection is complete, click Next to proceed to the Port Rules screen:

By default all TCP and UDP traffic on all ports (0 through 65535) is balanced across all nodes in the cluster with single client affinity. To define new rules or modify existing ones, for example to direct traffic on a particular port to a specific cluster node, use the Add and Edit buttons. Once port rules have been defined, click Finish to complete this phase of the configuration process. The Network Load Balancing Manager will now list the new cluster containing the single node as illustrated below:

With the cluster now configured and running, the next step is to add additional nodes to the cluster.

Adding and Removing Network Load Balanced Cluster Nodes

Before adding a new host to a cluster it is first necessary to install the Network Load Balancing feature on the new server as outlined previously in this chapter.

To add additional nodes to a Network Load Balanced cluster, right click on the cluster in the left hand panel of the Network Load Balancing Manager and select Add Host To Cluster. If no cluster is currently listed, right click on the Network Load Balancing Manager entry and select Connect to Existing. In the connection dialog enter the name or IP address of a node in the cluster (or the IP address of the cluster itself) and click on Connect. Once the connection is established, click on Finish to return to the manager interface where the cluster will be listed in the left hand panel using the cluster's full internet name.

To add a node, right click on the cluster name and select Add Host to Cluster. In the resulting dialog enter the name or IP address of the host to be added as a new cluster node and click on Connect. Once the connection is established, proceed to the next screen and specify the unique ID/priority for the new node, together with dedicated IP addresses and the initial host state. Click Next to change any of the cluster port rule settings. Once finished, the new host will be listed in the manager screen with a status of Pending, followed by Converging and finally Converged.

Existing nodes in a cluster may be either suspended or removed. In either case, right click on the node in the Network Load Balancing Manager and select either Suspend or Delete Host. A suspended node may be resumed by selecting Resume from the same menu. A deleted node must be added to the cluster once again using the steps outlined above.
